Merge branch 'main' of github.com:ed-donner/llm_engineering


16

community-contributions/Reputation_Radar/Dockerfile

Normal file


@@ -0,0 +1,16 @@

FROM python:3.11-slim

WORKDIR /app

COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY . .

ENV STREAMLIT_SERVER_HEADLESS=true \
    STREAMLIT_SERVER_ADDRESS=0.0.0.0 \
    STREAMLIT_SERVER_PORT=8501

EXPOSE 8501

CMD ["streamlit", "run", "app.py"]

13

community-contributions/Reputation_Radar/Makefile

Normal file


@@ -0,0 +1,13 @@

PYTHON ?= python

.PHONY: install run test

install:
	$(PYTHON) -m pip install --upgrade pip
	$(PYTHON) -m pip install -r requirements.txt

run:
	streamlit run app.py

test:
	pytest

124

community-contributions/Reputation_Radar/README.md

Normal file


@@ -0,0 +1,124 @@

# 📡 ReputationRadar

> Real-time brand intelligence with human-readable insights.

ReputationRadar is a Streamlit dashboard that unifies Reddit, Twitter/X, and Trustpilot chatter, classifies sentiment with OpenAI (or a VADER fallback), and delivers exportable executive summaries. It ships with modular services, caching, retry-aware scrapers, demo data, and pytest coverage, ready for production hardening or internal deployment.

---

## Table of Contents

- [Demo](#demo)
- [Feature Highlights](#feature-highlights)
- [Architecture Overview](#architecture-overview)
- [Quick Start](#quick-start)
- [Configuration & Credentials](#configuration--credentials)
- [Running Tests](#running-tests)
- [Working Without API Keys](#working-without-api-keys)
- [Exports & Deliverables](#exports--deliverables)
- [Troubleshooting](#troubleshooting)
- [Legal & Compliance](#legal--compliance)

---

## Demo

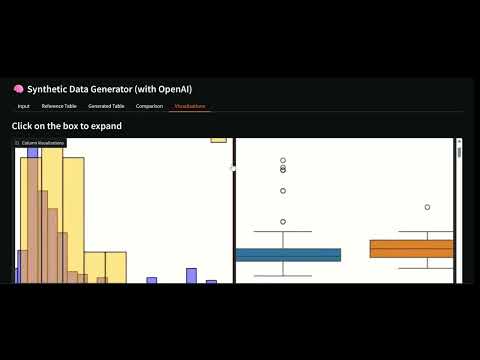

The video demo of the app can be found at:-

|

||||||

|

https://drive.google.com/file/d/1XZ09NOht1H5LCJEbOrAldny2L5SV1DeT/view?usp=sharing

## Feature Highlights

- **Adaptive Ingestion** – Toggle Reddit, Twitter/X, and Trustpilot independently; backoff, caching, and polite scraping help keep requests within provider rate limits.
- **Smart Sentiment** – Batch OpenAI classification with rationale-aware prompts and automatic fallback to VADER when credentials are missing.
- **Actionable Summaries** – Executive brief card (highlights, risks, tone, actions) plus a refreshed PDF layout that respects margins and typography.
- **Interactive Insights** – Plotly visuals, per-source filtering, and a lean “Representative Mentions” link list to avoid content overload.
- **Export Suite** – CSV, Excel (auto-sized columns), and polished PDF snapshots for stakeholder handoffs.
- **Robust Foundation** – Structured logging, reusable UI components, pytest suites, Dockerfile, and Makefile for frictionless iteration.
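
The **Smart Sentiment** routing above (batched LLM calls when a key is present, VADER otherwise) can be sketched roughly as follows. This is a hypothetical illustration, not the code in `services/llm.py`: `chunk`, `label_from_compound`, and `classify` are made-up names, the OpenAI request is abstracted as a caller-supplied `llm_batch` function, and `compound_score` stands in for VADER's compound score (for example `SentimentIntensityAnalyzer().polarity_scores(text)["compound"]` from the `vaderSentiment` package). The ±0.05 cut-offs are the thresholds suggested by the VADER authors.

```python
from typing import Callable, Iterator, List, Optional, Sequence


def chunk(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive batches of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start : start + batch_size])


def label_from_compound(compound: float) -> str:
    """Map a VADER compound score in [-1, 1] to a sentiment label."""
    if compound >= 0.05:
        return "positive"
    if compound <= -0.05:
        return "negative"
    return "neutral"


def classify(
    texts: Sequence[str],
    api_key: Optional[str],
    llm_batch: Callable[[List[str]], List[str]],
    compound_score: Callable[[str], float],
    batch_size: int = 20,
) -> List[str]:
    """Label each text: LLM batches when a key exists, lexicon fallback otherwise."""
    if not api_key:
        # No credentials: score locally, one text at a time.
        return [label_from_compound(compound_score(text)) for text in texts]
    labels: List[str] = []
    for batch in chunk(texts, batch_size):
        # One request per batch keeps the number of API calls low.
        labels.extend(llm_batch(batch))
    return labels
```

Injecting both scorers keeps the routing logic testable without network access or extra packages; a real implementation would also need to handle malformed or partial LLM responses.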

---

## Architecture Overview

```
community-contributions/Reputation_Radar/
├── app.py             # Streamlit orchestrator & layout
├── components/        # Sidebar, dashboard, summaries, loaders
├── services/          # Reddit/Twitter clients, Trustpilot scraper, LLM wrapper, utilities
├── samples/           # Demo JSON payloads (auto-loaded when credentials missing)
├── tests/             # Pytest coverage for utilities and LLM fallback
├── assets/            # Placeholder icons/logo
├── logs/              # Streaming log output
├── requirements.txt   # Runtime dependencies (includes PDF + Excel writers)
├── Dockerfile         # Containerised deployment recipe
└── Makefile           # Helper targets for install/run/test
```

Each service returns a normalised payload to keep the downstream sentiment pipeline deterministic. Deduplication is handled centrally via fuzzy matching, and timestamps are coerced to UTC before analysis.
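
A minimal sketch of the normalisation step described above, assuming items are dicts with `text` and `timestamp` keys (as `app.py` uses them). The helper names, the whitespace and lower-case normalisation, the choice of `difflib.SequenceMatcher` as the fuzzy matcher, and the 0.9 similarity threshold are all assumptions for illustration; the project's `normalize_items` may use a different matcher or cut-off.

```python
import re
from datetime import datetime, timezone
from difflib import SequenceMatcher
from typing import Dict, List


def to_utc(value: datetime) -> datetime:
    """Coerce a datetime to UTC; naive values are assumed to already be UTC."""
    if value.tzinfo is None:
        return value.replace(tzinfo=timezone.utc)
    return value.astimezone(timezone.utc)


def _normalise_text(text: str) -> str:
    """Collapse whitespace and lower-case so trivial edits do not defeat matching."""
    return re.sub(r"\s+", " ", text).strip().lower()


def dedupe(items: List[Dict], threshold: float = 0.9) -> List[Dict]:
    """Keep the first item of each group of near-identical texts, with UTC timestamps."""
    kept: List[Dict] = []
    seen: List[str] = []
    for item in items:
        text = _normalise_text(item["text"])
        if any(SequenceMatcher(None, text, other).ratio() >= threshold for other in seen):
            continue  # Near-duplicate of something already kept.
        seen.append(text)
        kept.append({**item, "timestamp": to_utc(item["timestamp"])})
    return kept
```

Pairwise comparison is O(n²), which is fine for a few hundred mentions but would need blocking or hashing for much larger batches.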

---

## Quick Start

1. **Clone the repository and enter the project directory (`community-contributions/Reputation_Radar`).**
2. **Install dependencies and launch Streamlit:**

   ```bash
   pip install -r requirements.txt && streamlit run app.py
   ```

   (Use a virtual environment if preferred; `make install` and `make run` wrap the same steps.)
3. **Populate the sidebar:** add your brand name, optional filters, the sources you want enabled, and API credentials (stored only in session state).
4. **Click “Run Analysis 🚀”** and follow the status indicators as sources load, sentiment is classified, and summaries render.

### Optional Docker Run

```bash
docker build -t reputation-radar .
docker run --rm -p 8501:8501 -e OPENAI_API_KEY=your_key reputation-radar
```

---

## Configuration & Credentials

The app reads credentials from `.env`, Streamlit secrets, or direct sidebar input; an OpenAI key entered in the sidebar takes precedence over the environment. Supported variables:

| Variable | Purpose |
| --- | --- |
| `OPENAI_API_KEY` | Enables OpenAI sentiment + executive summary (falls back to VADER if absent). |
| `REDDIT_CLIENT_ID` | PRAW client ID for Reddit API access. |
| `REDDIT_CLIENT_SECRET` | PRAW client secret. |
| `REDDIT_USER_AGENT` | Descriptive user agent (e.g., `ReputationRadar/1.0 by you`); defaults to `ReputationRadar/1.0` if unset. |
| `TWITTER_BEARER_TOKEN` | Twitter/X v2 recent search bearer token. |

Credential validation mirrors the guidance from `week1/day1.ipynb`—mistyped OpenAI keys surface helpful warnings before analysis begins.
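
The key precedence used in `app.py` (sidebar input first, then `OPENAI_API_KEY` from the environment, which `load_dotenv` fills from `.env`) and notebook-style sanity checks can be sketched as below. This is a hypothetical stand-in, not the project's `validate_openai_key`: only the `(key, notices)` return shape is taken from how `app.py` calls it. The function names and messages are illustrative, and the `sk-proj-` prefix check mirrors the course notebook's advice; since other key prefixes exist, the sketch only warns rather than rejecting the key.

```python
from typing import List, Mapping, Optional, Tuple


def check_openai_key(key: Optional[str]) -> Tuple[Optional[str], List[str]]:
    """Return (usable key or None, human-readable notices)."""
    notices: List[str] = []
    if key is not None and key.strip() != key:
        notices.append("API key had leading/trailing whitespace; it was stripped.")
        key = key.strip()
    if not key:
        notices.append("No OpenAI API key found; sentiment will use the VADER fallback.")
        return None, notices
    if not key.startswith("sk-proj-"):
        # Warn only: the prefix check is a heuristic, not proof the key is invalid.
        notices.append("API key does not start with 'sk-proj-'; check you copied the right key.")
    return key, notices


def resolve_openai_key(
    sidebar_value: Optional[str], env: Mapping[str, str]
) -> Tuple[Optional[str], List[str]]:
    """Sidebar input wins; otherwise use OPENAI_API_KEY from the environment."""
    return check_openai_key(sidebar_value or env.get("OPENAI_API_KEY"))
```

Returning notices instead of raising lets the UI display them (as `app.py` does with `st.sidebar.info`) while still running with whatever key is available, e.g. `resolve_openai_key(sidebar_input, os.environ)`.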

---

## Running Tests

```bash
pytest
```

Tests cover sentiment fallback behaviour and core sanitisation/deduplication helpers. Extend them as you add new data transforms or UI logic.

---

## Working Without API Keys

- Reddit, Twitter, and Trustpilot can be toggled independently; missing credentials raise gentle warnings rather than hard failures.
- When an enabled source cannot be fetched (for example, because its credentials are missing), curated fixtures from `samples/` load in its place, keeping charts, exports, and PDF output functional in demo mode.
- The LLM layer drops to VADER sentiment scoring and skips the executive summary when `OPENAI_API_KEY` is absent.
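
The per-source fallback in `main()` (catch the service's warning, surface it, then load a bundled sample such as `load_sample_items("reddit_sample")`) reduces to the pattern below. It is a simplified, hypothetical sketch: `ServiceWarning` is redefined locally, and `fetch_with_fallback`, `load_samples`, the `samples/<name>.json` file layout, and the list-of-dicts sample format are assumptions rather than the project's actual helpers.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List, Tuple


class ServiceWarning(Exception):
    """Local stand-in for a recoverable problem such as missing credentials."""


def load_samples(samples_dir: Path, name: str) -> List[Dict]:
    """Read samples/<name>.json if it exists; return an empty list otherwise."""
    path = samples_dir / f"{name}.json"
    if not path.is_file():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def fetch_with_fallback(
    fetch: Callable[[], List[Dict]], samples_dir: Path, sample_name: str
) -> Tuple[List[Dict], List[str]]:
    """Return (items, warnings); demo items are used only when fetch() raises ServiceWarning."""
    try:
        return fetch(), []
    except ServiceWarning as warning:
        return load_samples(samples_dir, sample_name), [str(warning)]
```

Any other exception propagates unchanged, matching how `app.py` reports a `ServiceError` with `st.error` instead of substituting demo data.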

---

## Exports & Deliverables

- **CSV** – Clean UTF-8 dataset for quick spreadsheet edits.
- **Excel** – Auto-sized columns and formatted timestamps, ready to drop into stakeholder workbooks.
- **PDF** – Professionally typeset executive summary with bullet lists, consistent margins, and wrapped excerpts (thanks to ReportLab’s Platypus engine).
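
The Excel auto-sizing follows a simple rule in `_build_excel`: each column is as wide as its longest value (rendered as text) or its header, plus 2 characters of padding, capped at 60. A dependency-free sketch of that calculation is below; `column_widths` is a hypothetical name, and in `app.py` the resulting widths are passed to XlsxWriter's `worksheet.set_column`.

```python
from typing import Dict, List, Sequence


def column_widths(
    columns: Sequence[str],
    rows: List[Dict[str, object]],
    padding: int = 2,
    cap: int = 60,
) -> List[int]:
    """Width per column: longest rendered value or header, plus padding, capped at `cap`."""
    widths: List[int] = []
    for column in columns:
        longest = max([len(column)] + [len(str(row.get(column, ""))) for row in rows])
        widths.append(min(cap, longest + padding))
    return widths
```

The cap keeps long free-text columns (such as the mention text) from producing unreadably wide sheets.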


All exports are regenerated on demand and never persisted server-side.
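
Keeping exports off disk works because `app.py` builds each file in memory (`io.BytesIO` for Excel and PDF, `DataFrame.to_csv(...).encode("utf-8")` for CSV) and passes the bytes straight to `st.download_button`. A standard-library-only sketch of the same idea for CSV follows; `rows_to_csv_bytes` is a hypothetical helper, not part of the project.

```python
import csv
import io
from typing import Dict, List, Sequence


def rows_to_csv_bytes(rows: List[Dict[str, object]], fieldnames: Sequence[str]) -> bytes:
    """Serialise rows to UTF-8 CSV entirely in memory (no temporary files)."""
    buffer = io.StringIO(newline="")
    # extrasaction="ignore" drops keys that are not listed in fieldnames.
    writer = csv.DictWriter(buffer, fieldnames=list(fieldnames), extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue().encode("utf-8")
```

Note that the `csv` module writes `\r\n` line endings by default.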

---

## Troubleshooting

- **OpenAI key missing/invalid** – Watch the sidebar notices; the app falls back gracefully, but no executive summary will be produced.
- **Twitter 401/403** – Confirm your bearer token scope and that the project has search access enabled.
- **Rate limiting (429)** – Built-in sleeps help, but repeated requests may require manual pauses. Try narrowing filters or reducing per-source limits.
- **Trustpilot blocks** – Respect robots.txt. If scraping is denied, switch to the official API or provide compliant CSV imports.
- **PDF text clipping** – Resolved by the new layout; if you customise templates ensure col widths/table styles remain inside page margins.
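
For the **Rate limiting (429)** case, the usual remedy is a retry loop with exponential backoff. The sketch below is generic and hypothetical rather than the project's scraper code: `RateLimited`, `with_backoff`, and the delay values are illustrative, and `sleep` is injectable so the logic can be tested without actually waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error a client raises when a provider answers HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None) -> None:
        super().__init__("rate limited")
        self.retry_after = retry_after


def with_backoff(
    call: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RateLimited, doubling the delay each attempt and adding jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited as exc:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: let the caller surface the error.
            # Honour a Retry-After hint when present, else 1s, 2s, 4s, ...
            delay = exc.retry_after if exc.retry_after is not None else base_delay * 2**attempt
            sleep(delay + random.uniform(0, 0.1 * delay))
    raise RuntimeError("unreachable")
```

A scraper would wrap its request, e.g. `with_backoff(lambda: fetch_page(url))`, where `fetch_page` is a hypothetical function that raises `RateLimited` on a 429 response. The small random jitter avoids several retries firing at exactly the same moment.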

---

## Legal & Compliance

ReputationRadar surfaces public discourse for legitimate monitoring purposes. Always comply with each platform’s Terms of Service, local regulations, and privacy expectations. Avoid storing third-party data longer than necessary, and never commit API keys to version control; the app only keeps them in Streamlit session state.

436

community-contributions/Reputation_Radar/app.py

Normal file


@@ -0,0 +1,436 @@

"""ReputationRadar Streamlit application entrypoint."""

from __future__ import annotations

import io
import json
import os
import re
from datetime import datetime, timezone
from typing import Dict, List, Optional
from xml.sax.saxutils import escape

import pandas as pd
import streamlit as st
from dotenv import load_dotenv
from reportlab.lib import colors
from reportlab.lib.pagesizes import letter
from reportlab.lib.styles import ParagraphStyle, getSampleStyleSheet
from reportlab.platypus import Paragraph, SimpleDocTemplate, Spacer, Table, TableStyle

from components.dashboard import render_overview, render_source_explorer, render_top_comments
from components.filters import render_sidebar
from components.summary import render_summary
from components.loaders import show_empty_state, source_status
from services import llm, reddit_client, trustpilot_scraper, twitter_client, utils
from services.llm import SentimentResult
from services.utils import (
    NormalizedItem,
    ServiceError,
    ServiceWarning,
    initialize_logger,
    load_sample_items,
    normalize_items,
    parse_date_range,
    validate_openai_key,
)


st.set_page_config(page_title="ReputationRadar", page_icon="📡", layout="wide")
load_dotenv(override=True)
LOGGER = initialize_logger()

st.title("📡 ReputationRadar")
st.caption("Aggregate brand chatter, classify sentiment, and surface actionable insights in minutes.")


def _get_env_defaults() -> Dict[str, Optional[str]]:
    """Read supported credentials from environment variables."""
    return {
        "OPENAI_API_KEY": os.getenv("OPENAI_API_KEY"),
        "REDDIT_CLIENT_ID": os.getenv("REDDIT_CLIENT_ID"),
        "REDDIT_CLIENT_SECRET": os.getenv("REDDIT_CLIENT_SECRET"),
        "REDDIT_USER_AGENT": os.getenv("REDDIT_USER_AGENT", "ReputationRadar/1.0"),
        "TWITTER_BEARER_TOKEN": os.getenv("TWITTER_BEARER_TOKEN"),
    }


@st.cache_data(ttl=600, show_spinner=False)
def cached_reddit_fetch(
    brand: str,
    limit: int,
    date_range: str,
    min_upvotes: int,
    client_id: str,
    client_secret: str,
    user_agent: str,
) -> List[NormalizedItem]:
    credentials = {
        "client_id": client_id,
        "client_secret": client_secret,
        "user_agent": user_agent,
    }
    return reddit_client.fetch_mentions(
        brand=brand,
        credentials=credentials,
        limit=limit,
        date_filter=date_range,
        min_upvotes=min_upvotes,
    )


@st.cache_data(ttl=600, show_spinner=False)
def cached_twitter_fetch(
    brand: str,
    limit: int,
    min_likes: int,
    language: str,
    bearer: str,
) -> List[NormalizedItem]:
    return twitter_client.fetch_mentions(
        brand=brand,
        bearer_token=bearer,
        limit=limit,
        min_likes=min_likes,
        language=language,
    )


@st.cache_data(ttl=600, show_spinner=False)
def cached_trustpilot_fetch(
    brand: str,
    language: str,
    pages: int = 2,
) -> List[NormalizedItem]:
    return trustpilot_scraper.fetch_reviews(brand=brand, language=language, pages=pages)


def _to_dataframe(items: List[NormalizedItem], sentiments: List[SentimentResult]) -> pd.DataFrame:
    data = []
    for item, sentiment in zip(items, sentiments):
        data.append(
            {
                "source": item["source"],
                "id": item["id"],
                "url": item.get("url"),
                "author": item.get("author"),
                "timestamp": item["timestamp"],
                "text": item["text"],
                "label": sentiment.label,
                "confidence": sentiment.confidence,
                "meta": json.dumps(item.get("meta", {})),
            }
        )
    df = pd.DataFrame(data)
    if not df.empty:
        df["timestamp"] = pd.to_datetime(df["timestamp"])
    return df


def _build_pdf(summary: Optional[Dict[str, str]], df: pd.DataFrame) -> bytes:
    buffer = io.BytesIO()
    doc = SimpleDocTemplate(
        buffer,
        pagesize=letter,
        rightMargin=40,
        leftMargin=40,
        topMargin=60,
        bottomMargin=40,
        title="ReputationRadar Executive Summary",
    )
    styles = getSampleStyleSheet()
    title_style = styles["Title"]
    subtitle_style = ParagraphStyle(
        "Subtitle",
        parent=styles["BodyText"],
        fontSize=10,
        leading=14,
        textColor="#555555",
    )
    body_style = ParagraphStyle(
        "Body",
        parent=styles["BodyText"],
        leading=14,
        fontSize=11,
    )
    bullet_style = ParagraphStyle(
        "Bullet",
        parent=body_style,
        leftIndent=16,
        bulletIndent=8,
        spaceBefore=2,
        spaceAfter=2,
    )
    heading_style = ParagraphStyle(
        "SectionHeading",
        parent=styles["Heading3"],
        spaceBefore=10,
        spaceAfter=6,
    )

    story: List[Paragraph | Spacer | Table] = []
    story.append(Paragraph("ReputationRadar Executive Summary", title_style))
    story.append(Spacer(1, 6))
    story.append(
        Paragraph(
            # timezone-aware "now" avoids the datetime.utcnow() deprecation in Python 3.12+.
            f"Generated on: {datetime.now(timezone.utc).strftime('%Y-%m-%d %H:%M')} UTC",
            subtitle_style,
        )
    )
    story.append(Spacer(1, 18))

    if summary and summary.get("raw"):
        story.extend(_summary_to_story(summary["raw"], body_style, bullet_style, heading_style))
    else:
        story.append(
            Paragraph(
                "Executive summary disabled (OpenAI key missing).",
                body_style,
            )
        )
    story.append(Spacer(1, 16))
    story.append(Paragraph("Sentiment Snapshot", styles["Heading2"]))
    story.append(Spacer(1, 10))

    table_data: List[List[Paragraph]] = [
        [
            Paragraph("Date", body_style),
            Paragraph("Sentiment", body_style),
            Paragraph("Source", body_style),
            Paragraph("Excerpt", body_style),
        ]
    ]
    snapshot = df.sort_values("timestamp", ascending=False).head(15)
    for _, row in snapshot.iterrows():
        excerpt = _truncate_text(row["text"], 180)
        table_data.append(
            [
                Paragraph(row["timestamp"].strftime("%Y-%m-%d %H:%M"), body_style),
                Paragraph(row["label"].title(), body_style),
                Paragraph(row["source"].title(), body_style),
                # Paragraph parses XML-like markup, so escape &, < and > in user text.
                Paragraph(escape(excerpt), body_style),
            ]
        )

    table = Table(table_data, colWidths=[90, 70, 80, 250])
    table.setStyle(
        TableStyle(
            [
                ("BACKGROUND", (0, 0), (-1, 0), colors.HexColor("#f3f4f6")),
                ("TEXTCOLOR", (0, 0), (-1, 0), colors.HexColor("#1f2937")),
                ("FONTNAME", (0, 0), (-1, 0), "Helvetica-Bold"),
                ("ALIGN", (0, 0), (-1, -1), "LEFT"),
                ("VALIGN", (0, 0), (-1, -1), "TOP"),
                ("INNERGRID", (0, 0), (-1, -1), 0.25, colors.HexColor("#d1d5db")),
                ("BOX", (0, 0), (-1, -1), 0.5, colors.HexColor("#9ca3af")),
                ("ROWBACKGROUNDS", (0, 1), (-1, -1), [colors.white, colors.HexColor("#f9fafb")]),
            ]
        )
    )
    story.append(table)

    doc.build(story)
    buffer.seek(0)
    return buffer.getvalue()


def _summary_to_story(
    raw_summary: str,
    body_style: ParagraphStyle,
    bullet_style: ParagraphStyle,
    heading_style: ParagraphStyle,
) -> List[Paragraph | Spacer]:
    story: List[Paragraph | Spacer] = []
    lines = [line.strip() for line in raw_summary.splitlines()]
    for line in lines:
        if not line:
            continue
        # Strip Markdown bold markers (**text**) before classifying the line.
        clean = re.sub(r"\*\*(.*?)\*\*", r"\1", line)
        if clean.endswith(":") and len(clean) < 40:
            story.append(Paragraph(escape(clean.rstrip(":")), heading_style))
            continue
        if clean.lower().startswith(("highlights", "risks & concerns", "recommended actions", "overall tone")):
            story.append(Paragraph(escape(clean), heading_style))
            continue
        # Test the cleaned line so a bold lead-in like "**Risk**: ..." is not mistaken for a "*" bullet.
        if clean.startswith(("-", "*")):
            story.append(Paragraph(escape(clean[1:].strip()), bullet_style, bulletText="•"))
        else:
            story.append(Paragraph(escape(clean), body_style))
    story.append(Spacer(1, 10))
    return story


def _truncate_text(text: str, max_length: int) -> str:
    clean = re.sub(r"\s+", " ", text).strip()
    if len(clean) <= max_length:
        return clean
    return clean[: max_length - 1].rstrip() + "…"


def _build_excel(df: pd.DataFrame) -> bytes:
    buffer = io.BytesIO()
    export_df = df.copy()
    export_df["timestamp"] = export_df["timestamp"].dt.strftime("%Y-%m-%d %H:%M")
    with pd.ExcelWriter(buffer, engine="xlsxwriter") as writer:
        export_df.to_excel(writer, index=False, sheet_name="Mentions")
        worksheet = writer.sheets["Mentions"]
        for idx, column in enumerate(export_df.columns):
            series = export_df[column].astype(str)
            # Longest value or header, plus padding, capped at 60 characters.
            max_len = min(60, max(series.map(len).max(), len(column)) + 2)
            worksheet.set_column(idx, idx, max_len)
    buffer.seek(0)
    return buffer.getvalue()


def main() -> None:
    env_defaults = _get_env_defaults()
    openai_env_key = env_defaults.get("OPENAI_API_KEY") or st.session_state.get("secrets", {}).get("OPENAI_API_KEY")
    validated_env_key, notices = validate_openai_key(openai_env_key)
    config = render_sidebar(env_defaults, tuple(notices))

    chosen_key = config["credentials"]["openai"] or validated_env_key
    openai_key, runtime_notices = validate_openai_key(chosen_key)
    for msg in runtime_notices:
        st.sidebar.info(msg)

    run_clicked = st.button("Run Analysis 🚀", type="primary")

    if not run_clicked:
        show_empty_state("Enter a brand name and click **Run Analysis** to get started.")
        return

    if not config["brand"]:
        st.error("Brand name is required.")
        return

    threshold = parse_date_range(config["date_range"])
    collected: List[NormalizedItem] = []

    with st.container():
        if config["sources"]["reddit"]:
            with source_status("Fetching Reddit mentions") as status:
                try:
                    reddit_items = cached_reddit_fetch(
                        brand=config["brand"],
                        limit=config["limits"]["reddit"],
                        date_range=config["date_range"],
                        min_upvotes=config["min_reddit_upvotes"],
                        client_id=config["credentials"]["reddit"]["client_id"],
                        client_secret=config["credentials"]["reddit"]["client_secret"],
                        user_agent=config["credentials"]["reddit"]["user_agent"],
                    )
                    reddit_items = [item for item in reddit_items if item["timestamp"] >= threshold]
                    status.write(f"Fetched {len(reddit_items)} Reddit items.")
                    collected.extend(reddit_items)
                except ServiceWarning as warning:
                    st.warning(str(warning))
                    demo = load_sample_items("reddit_sample")
                    if demo:
                        st.info("Loaded demo Reddit data.", icon="🧪")
                        collected.extend(demo)
                except ServiceError as error:
                    st.error(f"Reddit fetch failed: {error}")
        if config["sources"]["twitter"]:
            with source_status("Fetching Twitter mentions") as status:
                try:
                    twitter_items = cached_twitter_fetch(
                        brand=config["brand"],
                        limit=config["limits"]["twitter"],
                        min_likes=config["min_twitter_likes"],
                        language=config["language"],
                        bearer=config["credentials"]["twitter"],
                    )
                    twitter_items = [item for item in twitter_items if item["timestamp"] >= threshold]
                    status.write(f"Fetched {len(twitter_items)} tweets.")
                    collected.extend(twitter_items)
                except ServiceWarning as warning:
                    st.warning(str(warning))
                    demo = load_sample_items("twitter_sample")
                    if demo:
                        st.info("Loaded demo Twitter data.", icon="🧪")
                        collected.extend(demo)
                except ServiceError as error:
                    st.error(f"Twitter fetch failed: {error}")
        if config["sources"]["trustpilot"]:
            with source_status("Fetching Trustpilot reviews") as status:
                try:
                    trustpilot_items = cached_trustpilot_fetch(
                        brand=config["brand"],
                        language=config["language"],
                    )
                    trustpilot_items = [item for item in trustpilot_items if item["timestamp"] >= threshold]
                    status.write(f"Fetched {len(trustpilot_items)} reviews.")
                    collected.extend(trustpilot_items)
                except ServiceWarning as warning:
                    st.warning(str(warning))
                    demo = load_sample_items("trustpilot_sample")
                    if demo:
                        st.info("Loaded demo Trustpilot data.", icon="🧪")
                        collected.extend(demo)
                except ServiceError as error:
                    st.error(f"Trustpilot fetch failed: {error}")

    if not collected:
        show_empty_state("No mentions found. Try enabling more sources or loosening filters.")
        return

    cleaned = normalize_items(collected)
    if not cleaned:
        show_empty_state("All results were filtered out as noise. Try again with different settings.")
        return

    sentiment_service = llm.LLMService(
        api_key=config["credentials"]["openai"] or openai_key,
        batch_size=config["batch_size"],
    )
    sentiments = sentiment_service.classify_sentiment_batch([item["text"] for item in cleaned])
    df = _to_dataframe(cleaned, sentiments)

    render_overview(df)
    render_top_comments(df)

    summary_payload: Optional[Dict[str, str]] = None
    if sentiment_service.available():
        try:
            summary_payload = sentiment_service.summarize_overall(
                [{"label": row["label"], "text": row["text"]} for _, row in df.iterrows()]
            )
        except ServiceWarning as warning:
            st.warning(str(warning))
    else:
        st.info("OpenAI key missing. Using VADER fallback for sentiment; summary disabled.", icon="ℹ️")

    render_summary(summary_payload)
    render_source_explorer(df)

    csv_data = df.to_csv(index=False).encode("utf-8")
    excel_data = _build_excel(df)
    pdf_data = _build_pdf(summary_payload, df)
    col_csv, col_excel, col_pdf = st.columns(3)
    with col_csv:
        st.download_button(
            "⬇️ Export CSV",
            data=csv_data,
            file_name="reputation_radar.csv",
            mime="text/csv",
        )
    with col_excel:
        st.download_button(
            "⬇️ Export Excel",
            data=excel_data,
            file_name="reputation_radar.xlsx",
            mime="application/vnd.openxmlformats-officedocument.spreadsheetml.sheet",
        )
    with col_pdf:
        st.download_button(
            "⬇️ Export PDF Summary",
            data=pdf_data,
            file_name="reputation_radar_summary.pdf",
            mime="application/pdf",
        )

    st.success("Analysis complete! Review the insights above.")


if __name__ == "__main__":
    main()

@@ -0,0 +1,5 @@

"""Reusable Streamlit UI components for ReputationRadar."""

from . import dashboard, filters, loaders, summary

__all__ = ["dashboard", "filters", "loaders", "summary"]

136

community-contributions/Reputation_Radar/components/dashboard.py

Normal file


@@ -0,0 +1,136 @@

"""Render the ReputationRadar dashboard components."""

from __future__ import annotations

from typing import Dict, Optional

import pandas as pd
import plotly.express as px
import streamlit as st

SOURCE_CHIPS = {
    "reddit": "🔺 Reddit",
    "twitter": "✖️ Twitter",
    "trustpilot": "⭐ Trustpilot",
}

SENTIMENT_COLORS = {
    "positive": "#4caf50",
    "neutral": "#90a4ae",
    "negative": "#ef5350",
}


def render_overview(df: pd.DataFrame) -> None:
    """Display charts summarising sentiment."""
    counts = (
        df["label"]
        .value_counts()
        .reindex(["positive", "neutral", "negative"], fill_value=0)
        .rename_axis("label")
        .reset_index(name="count")
    )
    pie = px.pie(
        counts,
        names="label",
        values="count",
        color="label",
        color_discrete_map=SENTIMENT_COLORS,
        title="Sentiment distribution",
    )
    pie.update_traces(textinfo="percent+label")

    ts = (
        df.set_index("timestamp")
        .groupby([pd.Grouper(freq="D"), "label"])
        .size()
        .reset_index(name="count")
    )
    if not ts.empty:
        ts_plot = px.line(
            ts,
            x="timestamp",
            y="count",
            color="label",
            color_discrete_map=SENTIMENT_COLORS,
            markers=True,
            title="Mentions over time",
        )
    else:
        ts_plot = None

    col1, col2 = st.columns(2)
    with col1:
        st.plotly_chart(pie, use_container_width=True)
    with col2:
        if ts_plot is not None:
            st.plotly_chart(ts_plot, use_container_width=True)
        else:
            st.info("Not enough data for a time-series. Try widening the date range.", icon="📆")


def render_top_comments(df: pd.DataFrame) -> None:
    """Show representative comments per sentiment."""
    st.subheader("Representative Mentions")
    cols = st.columns(3)
    for idx, sentiment in enumerate(["positive", "neutral", "negative"]):
        subset = (
            df[df["label"] == sentiment]
            .sort_values("confidence", ascending=False)
            .head(5)
        )
        with cols[idx]:
            st.caption(sentiment.capitalize())
            if subset.empty:
                st.write("No items yet.")
                continue
            for _, row in subset.iterrows():
                chip = SOURCE_CHIPS.get(row["source"], row["source"])
                author = row.get("author") or "Unknown"
                timestamp = row["timestamp"].strftime("%Y-%m-%d %H:%M")
                label = f"{chip} · {author} · {timestamp}"
                if row.get("url"):
                    st.markdown(f"- [{label}]({row['url']})")
                else:
                    st.markdown(f"- {label}")


def render_source_explorer(df: pd.DataFrame) -> None:
    """Interactive tabular explorer with pagination and filters."""
    with st.expander("Source Explorer", expanded=False):
        search_term = st.text_input("Search mentions", key="explorer_search")
        selected_source = st.selectbox("Source filter", options=["All"] + list(SOURCE_CHIPS.values()))
        min_conf = st.slider("Minimum confidence", min_value=0.0, max_value=1.0, value=0.0, step=0.1)

        filtered = df.copy()
        if search_term:
            # Literal match, so queries such as "C++" or "(beta)" do not raise regex errors.
            filtered = filtered[filtered["text"].str.contains(search_term, case=False, na=False, regex=False)]
        if selected_source != "All":
            source_key = _reverse_lookup(selected_source)
            if source_key:
                filtered = filtered[filtered["source"] == source_key]
        filtered = filtered[filtered["confidence"] >= min_conf]

        if filtered.empty:
            st.info("No results found. Try widening the date range or removing filters.", icon="🪄")
            return

        page_size = 10
        total_pages = max(1, (len(filtered) + page_size - 1) // page_size)
        page = st.number_input("Page", min_value=1, max_value=total_pages, value=1)
        start = (page - 1) * page_size
        end = start + page_size

        explorer_df = filtered.iloc[start:end].copy()
        explorer_df["source"] = explorer_df["source"].map(SOURCE_CHIPS).fillna(explorer_df["source"])
        explorer_df["timestamp"] = explorer_df["timestamp"].dt.strftime("%Y-%m-%d %H:%M")
        explorer_df = explorer_df[["timestamp", "source", "author", "label", "confidence", "text", "url"]]

|

||||||

|

|

||||||

|

st.dataframe(explorer_df, use_container_width=True, hide_index=True)

|

||||||

|

|

||||||

|

|

||||||

|

def _reverse_lookup(value: str) -> Optional[str]:

|

||||||

|

for key, chip in SOURCE_CHIPS.items():

|

||||||

|

if chip == value:

|

||||||

|

return key

|

||||||

|

return None

|

||||||
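The explorer in `render_source_explorer` pages through results with integer ceiling division, `total_pages = max(1, (len(filtered) + page_size - 1) // page_size)`, and 1-indexed page `p` covers rows `(p - 1) * page_size` up to `start + page_size`. A minimal pure-Python sketch of that arithmetic follows; `page_bounds` and `paginate` are hypothetical helpers (a plain list slice stands in for `filtered.iloc[start:end]`), and the clamping of out-of-range pages is an addition, since in the app the `number_input` bounds already keep `page` in range:

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def page_bounds(total_rows: int, page: int, page_size: int = 10) -> Tuple[int, int, int]:
    """Return (total_pages, start, end) for a 1-indexed page, as in render_source_explorer."""
    # Ceiling division without floats; always report at least one page.
    total_pages = max(1, (total_rows + page_size - 1) // page_size)
    # Clamp the requested page (assumption: the Streamlit widget does this via min/max).
    page = min(max(1, page), total_pages)
    start = (page - 1) * page_size
    end = start + page_size
    return total_pages, start, end


def paginate(rows: Sequence[T], page: int, page_size: int = 10) -> List[T]:
    """Hypothetical helper: slice one page out of a sequence, like filtered.iloc[start:end]."""
    _, start, end = page_bounds(len(rows), page, page_size)
    return list(rows[start:end])


if __name__ == "__main__":
    rows = list(range(23))
    print(page_bounds(len(rows), 3))  # (3, 20, 30)
    print(paginate(rows, 3))  # [20, 21, 22]
```

Slicing past the end is safe in both Python and pandas `iloc`, which is why the last page needs no special case.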

128

community-contributions/Reputation_Radar/components/filters.py

Normal file


@@ -0,0 +1,128 @@

"""Sidebar filters and configuration controls."""

from __future__ import annotations

from typing import Dict, Optional, Tuple

import streamlit as st

DATE_RANGE_LABELS = {
    "24h": "Last 24 hours",
    "7d": "Last 7 days",
    "30d": "Last 30 days",
}

SUPPORTED_LANGUAGES = {
    "en": "English",
    "es": "Spanish",
    "de": "German",
    "fr": "French",
}


def _store_secret(key: str, value: str) -> None:
    """Persist sensitive values in session state only."""
    if value:
        st.session_state.setdefault("secrets", {})
        st.session_state["secrets"][key] = value


def _get_secret(key: str, default: str = "") -> str:
    return st.session_state.get("secrets", {}).get(key, default)


def render_sidebar(env_defaults: Dict[str, Optional[str]], openai_notices: Tuple[str, ...]) -> Dict[str, object]:
    """Render all sidebar controls and return configuration."""
    with st.sidebar:
        st.header("Tune Your Radar", anchor=False)
        brand = st.text_input("Brand Name*", value=st.session_state.get("brand_input", ""))
        if brand:
            st.session_state["brand_input"] = brand

        date_range = st.selectbox(
            "Date Range",
            options=list(DATE_RANGE_LABELS.keys()),
            format_func=lambda key: DATE_RANGE_LABELS[key],
            index=1,
        )
        min_reddit_upvotes = st.number_input(
            "Minimum Reddit upvotes",
            min_value=0,
            value=st.session_state.get("min_reddit_upvotes", 4),
        )
        st.session_state["min_reddit_upvotes"] = min_reddit_upvotes
        min_twitter_likes = st.number_input(
            "Minimum X likes",
            min_value=0,
            value=st.session_state.get("min_twitter_likes", 100),
        )
        st.session_state["min_twitter_likes"] = min_twitter_likes
        language = st.selectbox(
            "Language",
            options=list(SUPPORTED_LANGUAGES.keys()),
            format_func=lambda key: SUPPORTED_LANGUAGES[key],
            index=0,
        )

        st.markdown("### Sources")
        reddit_enabled = st.toggle("🔺 Reddit", value=st.session_state.get("reddit_enabled", True))
        twitter_enabled = st.toggle("✖️ Twitter", value=st.session_state.get("twitter_enabled", True))
        trustpilot_enabled = st.toggle("⭐ Trustpilot", value=st.session_state.get("trustpilot_enabled", True))
        st.session_state["reddit_enabled"] = reddit_enabled
        st.session_state["twitter_enabled"] = twitter_enabled
        st.session_state["trustpilot_enabled"] = trustpilot_enabled

        st.markdown("### API Keys")
        openai_key_default = env_defaults.get("OPENAI_API_KEY") or _get_secret("OPENAI_API_KEY")
        openai_key = st.text_input("OpenAI API Key", value=openai_key_default or "", type="password", help="Stored only in this session.")
        _store_secret("OPENAI_API_KEY", openai_key.strip())
        reddit_client_id = st.text_input("Reddit Client ID", value=env_defaults.get("REDDIT_CLIENT_ID") or _get_secret("REDDIT_CLIENT_ID"), type="password")
        reddit_client_secret = st.text_input("Reddit Client Secret", value=env_defaults.get("REDDIT_CLIENT_SECRET") or _get_secret("REDDIT_CLIENT_SECRET"), type="password")
        reddit_user_agent = st.text_input("Reddit User Agent", value=env_defaults.get("REDDIT_USER_AGENT") or _get_secret("REDDIT_USER_AGENT"))
        twitter_bearer_token = st.text_input("Twitter Bearer Token", value=env_defaults.get("TWITTER_BEARER_TOKEN") or _get_secret("TWITTER_BEARER_TOKEN"), type="password")
        _store_secret("REDDIT_CLIENT_ID", reddit_client_id.strip())
        _store_secret("REDDIT_CLIENT_SECRET", reddit_client_secret.strip())
        _store_secret("REDDIT_USER_AGENT", reddit_user_agent.strip())
        _store_secret("TWITTER_BEARER_TOKEN", twitter_bearer_token.strip())

        if openai_notices:
            for notice in openai_notices:
                st.info(notice)

        with st.expander("Advanced Options", expanded=False):
            reddit_limit = st.slider("Reddit results", min_value=10, max_value=100, value=st.session_state.get("reddit_limit", 40), step=5)
            twitter_limit = st.slider("Twitter results", min_value=10, max_value=100, value=st.session_state.get("twitter_limit", 40), step=5)
            trustpilot_limit = st.slider("Trustpilot results", min_value=10, max_value=60, value=st.session_state.get("trustpilot_limit", 30), step=5)
            llm_batch_size = st.slider("OpenAI batch size", min_value=5, max_value=20, value=st.session_state.get("llm_batch_size", 20), step=5)
            st.session_state["reddit_limit"] = reddit_limit
            st.session_state["twitter_limit"] = twitter_limit
            st.session_state["trustpilot_limit"] = trustpilot_limit
            st.session_state["llm_batch_size"] = llm_batch_size

    return {
        "brand": brand.strip(),
        "date_range": date_range,
        "min_reddit_upvotes": min_reddit_upvotes,
        "min_twitter_likes": min_twitter_likes,
        "language": language,
        "sources": {
            "reddit": reddit_enabled,
            "twitter": twitter_enabled,
            "trustpilot": trustpilot_enabled,
        },
        "limits": {
            "reddit": reddit_limit,
            "twitter": twitter_limit,
            "trustpilot": trustpilot_limit,
        },
        "batch_size": llm_batch_size,
        "credentials": {
            "openai": openai_key.strip(),
            "reddit": {
                "client_id": reddit_client_id.strip(),
                "client_secret": reddit_client_secret.strip(),
                "user_agent": reddit_user_agent.strip(),
            },
            "twitter": twitter_bearer_token.strip(),
        },
    }
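`_store_secret` and `_get_secret` keep credentials in a nested `"secrets"` dict inside `st.session_state`, skipping empty strings so a blank field never overwrites a stored value, and `render_sidebar` prefills each credential field with `env_defaults.get(KEY) or _get_secret(KEY)`, so an environment value wins over the session copy. A minimal sketch of the same rules, assuming a plain `dict` stands in for `st.session_state` so it runs without Streamlit; `resolve_default` is a hypothetical name for the inline prefill expression:

```python
from typing import Dict, MutableMapping, Optional


def store_secret(state: MutableMapping[str, dict], key: str, value: str) -> None:
    """Same rule as _store_secret: ignore empty values, otherwise save under state["secrets"]."""
    if value:
        state.setdefault("secrets", {})
        state["secrets"][key] = value


def get_secret(state: MutableMapping[str, dict], key: str, default: str = "") -> str:
    """Same rule as _get_secret: a missing bucket or key falls back to the default."""
    return state.get("secrets", {}).get(key, default)


def resolve_default(env_defaults: Dict[str, Optional[str]], state: MutableMapping[str, dict], key: str) -> str:
    """Prefill value for a sidebar field: environment first, then the session copy."""
    return env_defaults.get(key) or get_secret(state, key)


if __name__ == "__main__":
    session: dict = {}  # stand-in for st.session_state
    store_secret(session, "OPENAI_API_KEY", "sk-test")  # placeholder value
    store_secret(session, "OPENAI_API_KEY", "")  # ignored: empty strings are never stored
    print(get_secret(session, "OPENAI_API_KEY"))  # sk-test
    print(resolve_default({"OPENAI_API_KEY": None}, session, "OPENAI_API_KEY"))  # sk-test
```

Because the values live only in session state, they disappear when the browser session ends, which matches the "Stored only in this session." help text on the OpenAI key field.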

@@ -0,0 +1,25 @@

"""Loading indicators and status helpers."""

from __future__ import annotations

from contextlib import contextmanager
from typing import Iterator

import streamlit as st


@contextmanager
def source_status(label: str) -> Iterator[st.delta_generator.DeltaGenerator]:
    """Context manager that yields a status widget for source fetching."""
    status = st.status(label, expanded=True)
    try:
        yield status
        status.update(label=f"{label} ✅", state="complete")
    except Exception as exc:  # noqa: BLE001
        status.update(label=f"{label} ⚠️ {exc}", state="error")
        raise


def show_empty_state(message: str) -> None:
    """Render a friendly empty-state callout."""
    st.info(message, icon="🔎")
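`source_status` wraps `st.status`: on a clean exit it relabels the widget with ✅ and `state="complete"`, and on an exception it shows the error with `state="error"` and re-raises, so the caller still sees the failure. The same control flow can be exercised without Streamlit by swapping in a hypothetical `FakeStatus` recorder for the object `st.status` returns:

```python
from contextlib import contextmanager
from typing import Iterator, List, Tuple


class FakeStatus:
    """Hypothetical stand-in for a Streamlit status container; records update() calls."""

    def __init__(self, label: str) -> None:
        self.updates: List[Tuple[str, str]] = [(label, "running")]

    def update(self, label: str, state: str) -> None:
        self.updates.append((label, state))


@contextmanager
def source_status(label: str) -> Iterator[FakeStatus]:
    """Same control flow as components.loaders.source_status, minus the st.status call."""
    status = FakeStatus(label)
    try:
        yield status
        status.update(label=f"{label} ✅", state="complete")
    except Exception as exc:
        status.update(label=f"{label} ⚠️ {exc}", state="error")
        raise  # re-raise so the caller can decide how to recover


if __name__ == "__main__":
    with source_status("Reddit") as ok:
        pass
    print(ok.updates[-1])  # ('Reddit ✅', 'complete')

    try:
        with source_status("Twitter") as failed:
            raise RuntimeError("rate limited")
    except RuntimeError:
        pass
    print(failed.updates[-1][1])  # error
```

An exception raised inside the `with` body is thrown into the generator at the `yield`, which is what lets the `except` branch mark the widget before the error propagates.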

@@ -0,0 +1,23 @@

"""Executive summary display components."""

from __future__ import annotations

from typing import Dict, Optional

import streamlit as st


def render_summary(summary: Optional[Dict[str, str]]) -> None:
    """Render executive summary card."""
    st.subheader("Executive Summary", anchor=False)
    if not summary:
        st.warning("Executive summary disabled. Provide an OpenAI API key to unlock this section.", icon="🤖")
        return
    # Each st.markdown call renders as its own element, so an opening <div> and a
    # closing </div> sent in separate calls never wrap the summary text. A bordered
    # container draws the card outline around the content instead.
    with st.container(border=True):
        st.markdown(summary.get("raw", ""))

16

community-contributions/Reputation_Radar/requirements.txt

Normal file


@@ -0,0 +1,16 @@

streamlit
praw
requests
beautifulsoup4
pandas
python-dotenv
tenacity
plotly
openai>=1.66.0
vaderSentiment
fuzzywuzzy[speedup]
python-Levenshtein
reportlab
tqdm
pytest
XlsxWriter

@@ -0,0 +1,20 @@

[
  {
    "source": "reddit",
    "id": "t3_sample1",
    "url": "https://www.reddit.com/r/technology/comments/sample1",
    "author": "techfan42",
    "timestamp": "2025-01-15T14:30:00+00:00",
    "text": "ReputationRadar did an impressive job resolving our customer issues within hours. Support has been world class!",
    "meta": {"score": 128, "num_comments": 24, "subreddit": "technology", "type": "submission"}
  },
  {
    "source": "reddit",
    "id": "t1_sample2",
    "url": "https://www.reddit.com/r/startups/comments/sample2/comment/sample",
    "author": "growthguru",
    "timestamp": "2025-01-14T10:10:00+00:00",
    "text": "Noticed a spike in downtime alerts with ReputationRadar this week. Anyone else seeing false positives?",
    "meta": {"score": 45, "subreddit": "startups", "type": "comment", "submission_title": "Monitoring tools"}
  }
]

@@ -0,0 +1,20 @@

[
  {
    "source": "trustpilot",
    "id": "trustpilot-001",
    "url": "https://www.trustpilot.com/review/reputationradar.ai",
    "author": "Dana",
    "timestamp": "2025-01-12T11:00:00+00:00",
    "text": "ReputationRadar has simplified our weekly reporting. The sentiment breakdowns are easy to understand and accurate.",
    "meta": {"rating": "5 stars"}
  },
  {
    "source": "trustpilot",
    "id": "trustpilot-002",
    "url": "https://www.trustpilot.com/review/reputationradar.ai?page=2",
    "author": "Liam",
    "timestamp": "2025-01-10T18:20:00+00:00",
    "text": "Support was responsive, but the Trustpilot integration kept timing out. Hoping for a fix soon.",
    "meta": {"rating": "3 stars"}
  }
]

@@ -0,0 +1,20 @@

[
  {
    "source": "twitter",
    "id": "173654001",
    "url": "https://twitter.com/brandlover/status/173654001",
    "author": "brandlover",
    "timestamp": "2025-01-15T16:45:00+00:00",
    "text": "Huge shoutout to ReputationRadar for flagging sentiment risks ahead of our launch. Saved us hours this morning!",
    "meta": {"likes": 57, "retweets": 8, "replies": 3, "quote_count": 2}
  },
  {
    "source": "twitter",
    "id": "173653991",
    "url": "https://twitter.com/critique/status/173653991",
    "author": "critique",
    "timestamp": "2025-01-13T09:12:00+00:00",
    "text": "The new ReputationRadar dashboard feels laggy and the PDF export failed twice. Dev team please check your rollout.",
    "meta": {"likes": 14, "retweets": 1, "replies": 5, "quote_count": 0}
  }
]
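The three sample files share one record shape (`source`, `id`, `url`, `author`, `timestamp`, `text`, `meta`), with ISO-8601 timestamps that carry a UTC offset. A stdlib-only sketch of parsing such records and keeping those inside one of the sidebar's date windows (`"24h"`, `"7d"`, `"30d"` from `DATE_RANGE_LABELS`) follows; the `WINDOWS` mapping, the `within_range` helper, and measuring the window back from a caller-supplied `now` are assumptions for illustration, not the app's own loader:

```python
import json
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List

# Assumed mapping from the sidebar's date-range keys to time windows.
WINDOWS = {"24h": timedelta(hours=24), "7d": timedelta(days=7), "30d": timedelta(days=30)}


def within_range(records: List[Dict[str, Any]], date_range: str, now: datetime) -> List[Dict[str, Any]]:
    """Keep records whose timestamp falls in [now - window, now]."""
    cutoff = now - WINDOWS[date_range]
    kept = []
    for record in records:
        # fromisoformat understands the "+00:00" offsets used in the sample files,
        # producing timezone-aware datetimes that compare safely with `now`.
        ts = datetime.fromisoformat(record["timestamp"])
        if cutoff <= ts <= now:
            kept.append(record)
    return kept


if __name__ == "__main__":
    sample = json.loads(
        '[{"source": "twitter", "id": "173654001", "timestamp": "2025-01-15T16:45:00+00:00"},'
        ' {"source": "twitter", "id": "173653991", "timestamp": "2025-01-13T09:12:00+00:00"}]'
    )
    now = datetime(2025, 1, 16, 12, 0, tzinfo=timezone.utc)
    print([r["id"] for r in within_range(sample, "24h", now)])  # ['173654001']
    print([r["id"] for r in within_range(sample, "7d", now)])  # ['173654001', '173653991']
```

`now` must be timezone-aware as well; comparing an aware timestamp with a naive `datetime` raises `TypeError`.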

@@ -0,0 +1,11 @@

"""Service layer exports for ReputationRadar."""

from . import llm, reddit_client, trustpilot_scraper, twitter_client, utils

__all__ = [
    "llm",
    "reddit_client",
    "trustpilot_scraper",
    "twitter_client",
    "utils",
]
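`LLMService.classify_sentiment_batch` in `services/llm.py` sends texts to OpenAI in chunks of `batch_size` (clamped to at least 1 in `__init__`). When no client is available or a request fails, it labels each text from VADER's compound score: `>= 0.2` is positive, `<= -0.2` is negative, and anything in between is neutral. The sketch below isolates chunking, result alignment, and that threshold rule so it runs without OpenAI or VADER. `chunked` here is a hypothetical re-implementation (the app imports its own from `.utils`), and the `model` callable stands in for the API call. It also aligns results per chunk, which is the safer variant: padding only once after all chunks can shift later results when an early chunk comes back short.

```python
from typing import Callable, Iterator, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def chunked(items: Sequence[T], size: int) -> Iterator[List[T]]:
    """Hypothetical stand-in for services.utils.chunked: consecutive slices of at most `size`."""
    size = max(1, size)
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def label_from_compound(compound: float) -> str:
    """Thresholds used by LLMService._vader_sentiment."""
    if compound >= 0.2:
        return "positive"
    if compound <= -0.2:
        return "negative"
    return "neutral"


def classify_all(
    texts: Sequence[str],
    batch_size: int,
    model: Callable[[List[str]], List[Tuple[str, float]]],
) -> List[Tuple[str, float]]:
    """Run `model` per chunk and keep output aligned with input, one result per text."""
    results: List[Tuple[str, float]] = []
    for chunk in chunked(texts, batch_size):
        chunk_results = model(chunk)[: len(chunk)]  # drop any extra items
        # Pad short replies inside the chunk so later chunks are not shifted.
        chunk_results += [("neutral", 0.33)] * (len(chunk) - len(chunk_results))
        results.extend(chunk_results)
    return results


if __name__ == "__main__":
    def fake_model(chunk: List[str]) -> List[Tuple[str, float]]:
        # Pretend the model forgot the last item of every chunk.
        return [("positive", 0.9)] * (len(chunk) - 1)

    texts = [f"mention {i}" for i in range(5)]
    print(len(classify_all(texts, batch_size=2, model=fake_model)))  # 5
    print(label_from_compound(0.5), label_from_compound(-0.2), label_from_compound(0.1))
    # positive negative neutral
```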

147

community-contributions/Reputation_Radar/services/llm.py

Normal file


@@ -0,0 +1,147 @@

"""LLM sentiment analysis and summarization utilities."""

from __future__ import annotations

import json
import logging
from dataclasses import dataclass
from typing import Any, Dict, Iterable, List, Optional, Sequence

try:  # pragma: no cover - optional dependency
    from openai import OpenAI
except ModuleNotFoundError:  # pragma: no cover
    OpenAI = None  # type: ignore[assignment]

from vaderSentiment.vaderSentiment import SentimentIntensityAnalyzer

from .utils import ServiceWarning, chunked

CLASSIFICATION_SYSTEM_PROMPT = "You are a precise brand-sentiment classifier. Output JSON only."
SUMMARY_SYSTEM_PROMPT = "You analyze brand chatter and produce concise, executive-ready summaries."


@dataclass
class SentimentResult:
    """Structured sentiment output."""

    label: str
    confidence: float


class LLMService:
    """Wrapper around OpenAI with VADER fallback."""

    def __init__(self, api_key: Optional[str], model: str = "gpt-4o-mini", batch_size: int = 20):
        self.batch_size = max(1, batch_size)
        self.model = model
        self.logger = logging.getLogger("services.llm")
        self._client: Optional[Any] = None
        self._analyzer = SentimentIntensityAnalyzer()
        if api_key and OpenAI is not None:
            try:
                self._client = OpenAI(api_key=api_key)
            except Exception as exc:  # noqa: BLE001
                self.logger.warning("Failed to initialize OpenAI client, using VADER fallback: %s", exc)
                self._client = None
        elif api_key and OpenAI is None:
            self.logger.warning("openai package not installed; falling back to VADER despite API key.")

    def available(self) -> bool:
        """Return whether OpenAI-backed features are available."""
        return self._client is not None

    def classify_sentiment_batch(self, texts: Sequence[str]) -> List[SentimentResult]:
        """Classify multiple texts, chunking if necessary."""
        if not texts:
            return []
        if not self.available():
            return [self._vader_sentiment(text) for text in texts]

        results: List[SentimentResult] = []
        for chunk in chunked(list(texts), self.batch_size):
            prompt_lines = ["Classify each item as \"positive\", \"neutral\", or \"negative\".", "Also output a confidence score between 0 and 1.", "Return an array of objects: [{\"label\": \"...\", \"confidence\": 0.0}].", "Items:"]
            prompt_lines.extend([f"{idx + 1}) {text}" for idx, text in enumerate(chunk)])
            prompt = "\n".join(prompt_lines)
            try:
                response = self._client.responses.create(  # type: ignore[union-attr]
                    model=self.model,
                    input=[
                        {"role": "system", "content": CLASSIFICATION_SYSTEM_PROMPT},
                        {"role": "user", "content": prompt},
                    ],
                    temperature=0,
                    max_output_tokens=500,
                )
                output_text = self._extract_text(response)
                parsed = json.loads(output_text)
                # Build the whole chunk before touching `results`: a malformed item then
                # falls back to VADER for this chunk without leaving partial results behind,
                # and extra or missing items cannot shift the alignment of later chunks.
                chunk_results = [
                    SentimentResult(
                        label=item.get("label", "neutral"),
                        confidence=float(item.get("confidence", 0.5)),
                    )
                    for item in parsed[: len(chunk)]
                ]
                chunk_results.extend(
                    SentimentResult(label="neutral", confidence=0.33)
                    for _ in range(len(chunk) - len(chunk_results))
                )
                results.extend(chunk_results)
            except Exception as exc:  # noqa: BLE001
                self.logger.warning("Classification fallback to VADER due to error: %s", exc)
                for text in chunk:
                    results.append(self._vader_sentiment(text))
        # Ensure the output length matches input
        if len(results) != len(texts):
            # align by padding with neutral
            results.extend([SentimentResult(label="neutral", confidence=0.33)] * (len(texts) - len(results)))
        return results

    def summarize_overall(self, findings: List[Dict[str, Any]]) -> Dict[str, Any]:
        """Create an executive summary using OpenAI."""
        if not self.available():
            raise ServiceWarning("OpenAI API key missing. Summary unavailable.")
        prompt_lines = [
            "Given these labeled items and their short rationales, write:",
            "- 5 bullet \"Highlights\"",
            "- 5 bullet \"Risks & Concerns\"",
            "- One-line \"Overall Tone\" (Positive/Neutral/Negative with brief justification)",
            "- 3 \"Recommended Actions\"",
            "Keep it under 180 words total. Be specific but neutral in tone.",
            "Items:",
        ]
        for idx, item in enumerate(findings, start=1):
            prompt_lines.append(
                f"{idx}) [{item.get('label','neutral').upper()}] {item.get('text','')}"
            )
        prompt = "\n".join(prompt_lines)
        try:
            response = self._client.responses.create(  # type: ignore[union-attr]
                model=self.model,
                input=[
                    {"role": "system", "content": SUMMARY_SYSTEM_PROMPT},
                    {"role": "user", "content": prompt},
                ],
                temperature=0.2,
                max_output_tokens=800,
            )
            output_text = self._extract_text(response)
            return {"raw": output_text}
        except Exception as exc:  # noqa: BLE001
            self.logger.error("Failed to generate summary: %s", exc)
            raise ServiceWarning("Unable to generate executive summary at this time.") from exc

    def _vader_sentiment(self, text: str) -> SentimentResult:
        scores = self._analyzer.polarity_scores(text)
        compound = scores["compound"]
        if compound >= 0.2:
            label = "positive"
        elif compound <= -0.2:
            label = "negative"
        else:
            label = "neutral"